New Page Indexing Issues Detected

The indexing of web pages is a vital aspect of search engine optimization (SEO), as it determines whether a page appears in search results. However, encountering indexing issues can hinder a website’s visibility and organic traffic potential. In this article, we will explore common new page indexing issues, their implications, and effective strategies to solve them. By understanding and addressing these challenges, you can ensure that your web pages are properly indexed, maximizing their chances of ranking high in search engine results.

Understanding New Page Indexing Issues

When launching new web pages, it’s essential to be aware of potential indexing challenges. One common issue is search engines failing to discover the new pages. This occurs when search engine crawlers are unable to locate and analyze the content. Another issue is slow indexing, where search engines take longer than expected to process and include the new pages in their index. These problems can hinder your website’s visibility, leading to missed opportunities for organic traffic and reduced search engine rankings.


Causes and Implications of New Page Indexing Issues

Several factors can contribute to new page indexing problems. Poor internal linking structures, complex URL structures, or blocked access due to robots.txt directives may prevent search engine crawlers from finding and indexing your new pages. Additionally, duplicate content issues, such as multiple URLs pointing to the same content, can confuse search engines and result in poor indexing. When new pages aren’t indexed promptly, it hampers their visibility, decreases organic traffic potential, and makes it difficult for users to find relevant information on your website.

Strategies to Solve New Page Indexing Issues

Optimize Internal Linking: Ensure a logical and well-structured internal linking system. Use descriptive anchor text to link to new pages from existing content, making it easier for search engines to discover and index them.
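One way to spot new pages that nothing links to is to walk the internal link graph from the homepage and list the pages that are never reached. The sketch below assumes you already have a mapping of each page to the internal links it contains (for example, from a site crawl export). The function name `unreachable_pages` and the sample paths are hypothetical.

```python
from collections import deque


def unreachable_pages(links, start="/"):
    """Return pages that cannot be reached by following links from `start`.

    `links` maps each page path to the list of internal paths it links to.
    """
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    # Any known page that was never visited has no inbound path from `start`.
    return sorted(set(links) - seen)


# Hypothetical site: /new-page links out, but nothing links to it.
site_links = {
    "/": ["/blog"],
    "/blog": ["/"],
    "/new-page": ["/"],
}
print(unreachable_pages(site_links))
```

Pages reported here are candidates for a link from existing, already-indexed content.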

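Submit XML Sitemap: Create an XML sitemap that lists the URLs you want indexed, and submit it to search engines (for example, through Google Search Console, or by referencing it with a Sitemap line in robots.txt). This helps search engine crawlers discover new pages more efficiently, although it does not guarantee that every listed page will be indexed.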
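As a rough illustration, a minimal sitemap can be generated with Python's standard library. The sketch below assumes you have a list of URLs with last-modified dates. The function name `build_sitemap`, the example.com URLs, and the dates are placeholders.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for a list of (url, lastmod) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder URLs and dates for illustration only.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-01-10"),
    ("https://example.com/new-page", "2024-01-15"),
])
print(sitemap_xml)
```

The resulting string can be saved as sitemap.xml at the site root and then submitted to search engines.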

Check Robots.txt: Review your robots.txt file to ensure it doesn’t unintentionally block search engines from accessing and indexing your new pages. Modify the file if necessary to grant access to relevant content.
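A quick way to test whether a rule blocks a new page is Python's built-in `urllib.robotparser`. The sketch below parses robots.txt content from a string so it runs offline. The rules, URLs, and the helper name `is_crawlable` are made up for illustration. Note that this parser follows the basic robots.txt rules and may not match every search engine's handling (for example, wildcard patterns), so treat the result as a first check rather than a final answer.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Return True if `user_agent` may fetch `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks everything under /drafts/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/
"""

for page in ("https://example.com/blog/new-page",
             "https://example.com/drafts/new-page"):
    status = "allowed" if is_crawlable(ROBOTS_TXT, page) else "blocked"
    print(page, status)
```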

Implement Canonical Tags: Use canonical tags to specify the preferred URL for your content, particularly when dealing with duplicate pages. This helps search engines understand which version of the page to index and display in search results.
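To confirm that a page declares the canonical URL you expect, you can extract the `<link rel="canonical">` element from its HTML. The sketch below uses only the standard library and works on an HTML string you have already downloaded. The class name `CanonicalFinder`, the helper `find_canonical`, and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag seen."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = dict(attrs)
        # rel may hold several space-separated values.
        rels = (attr.get("rel") or "").lower().split()
        if "canonical" in rels:
            self.canonical = attr.get("href")


def find_canonical(html):
    """Return the canonical URL declared in `html`, or None if absent."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Sample markup for a page reachable at several URLs (e.g. with tracking parameters).
sample = ('<html><head>'
          '<link rel="canonical" href="https://example.com/shoes">'
          '</head><body></body></html>')
print(find_canonical(sample))
```

If duplicate URLs return different canonical values, or none at all, search engines have to guess which version to index.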

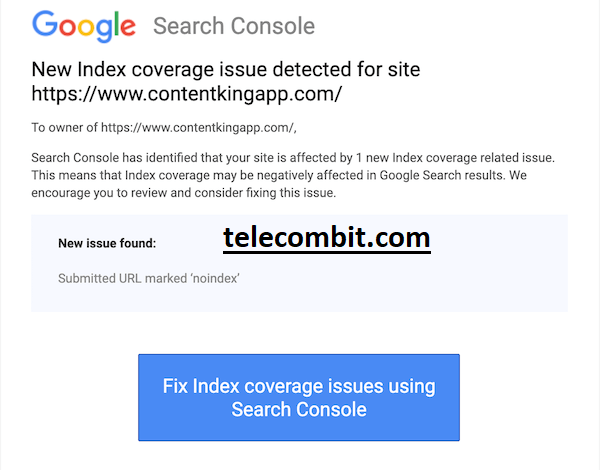

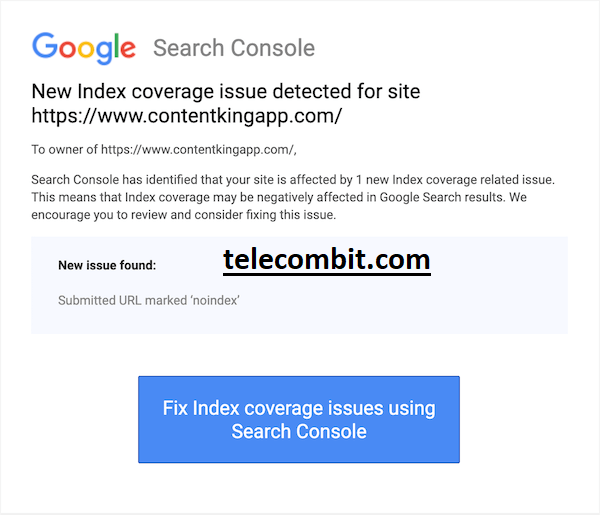

Request Indexing: Use the URL Inspection tool in Google Search Console (the successor to the retired “Fetch as Google” feature) to ask Google to crawl specific pages. Requesting indexing for a URL adds it to the crawl queue, which can speed up indexing but does not guarantee it.

Monitoring and Optimization

After implementing the above strategies, it’s crucial to monitor the indexing status of your new pages. Regularly check indexation using tools like Google Search Console and monitor organic traffic trends. If issues persist, review and refine your strategies, or seek professional assistance from SEO experts.


Conclusion

Ensuring proper indexing of new web pages is essential for maximizing organic visibility and attracting targeted traffic. By understanding the common indexing issues and their causes, and by implementing effective solutions, you can overcome these challenges and improve your website’s search engine rankings. Remember to optimize internal linking, submit XML sitemaps, review robots.txt, implement canonical tags, and request indexing through Google Search Console’s URL Inspection tool (which replaced “Fetch as Google”). By addressing new page indexing issues proactively, you can enhance your website’s visibility, attract more organic traffic, and ultimately achieve your SEO goals.